Megabite is a mobile app that automatically turns a photo of your food into a face.

Let’s face it, food is more fun when it looks happy. I was playing with my food one lunchtime, and I wondered to myself – would it be possible to automate this process? Solving the big problems right here.

This was a great opportunity to play with OpenCV on iOS, and see how the iPhone coped with image processing. You can get the source code here.

How does it work?

Megabite works by taking a photo of your plate of food, analysing the image to detect and extract the individual items on the plate, and then rearranging them into something much more interesting.

The app runs through the following steps to achieve this:

Preparing the image

First, the user takes a photo with the app. The photo is resized to 1,000 x 1,000 pixels (a good trade-off between processing time and output image quality), and then cropped to a circular (plate-shaped) mask:

The input and cropped images.
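The circular crop can be sketched in a few lines. This is a minimal pure-Python illustration rather than the app's code (which uses OpenCV on iOS); the names `circular_mask` and `crop_to_plate` are made up for this example, and it assumes a square image stored as a list of rows.

```python
def circular_mask(size):
    """Return a size x size grid of booleans, True inside the inscribed circle."""
    centre = (size - 1) / 2.0
    radius = size / 2.0
    return [
        [(x - centre) ** 2 + (y - centre) ** 2 <= radius ** 2 for x in range(size)]
        for y in range(size)
    ]


def crop_to_plate(image, background=0):
    """Keep pixels inside the circle; replace everything else with background.

    Assumes `image` is square (the app resizes to 1,000 x 1,000 first).
    """
    size = len(image)
    mask = circular_mask(size)
    return [
        [pixel if mask[y][x] else background for x, pixel in enumerate(row)]
        for y, row in enumerate(image)
    ]


# Tiny 5 x 5 stand-in for the 1,000 x 1,000 photo.
image = [[9] * 5 for _ in range(5)]
cropped = crop_to_plate(image)
```

The corners of `cropped` are blanked out, while the centre and the midpoints of each edge keep their original values.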

Detecting & filtering contours

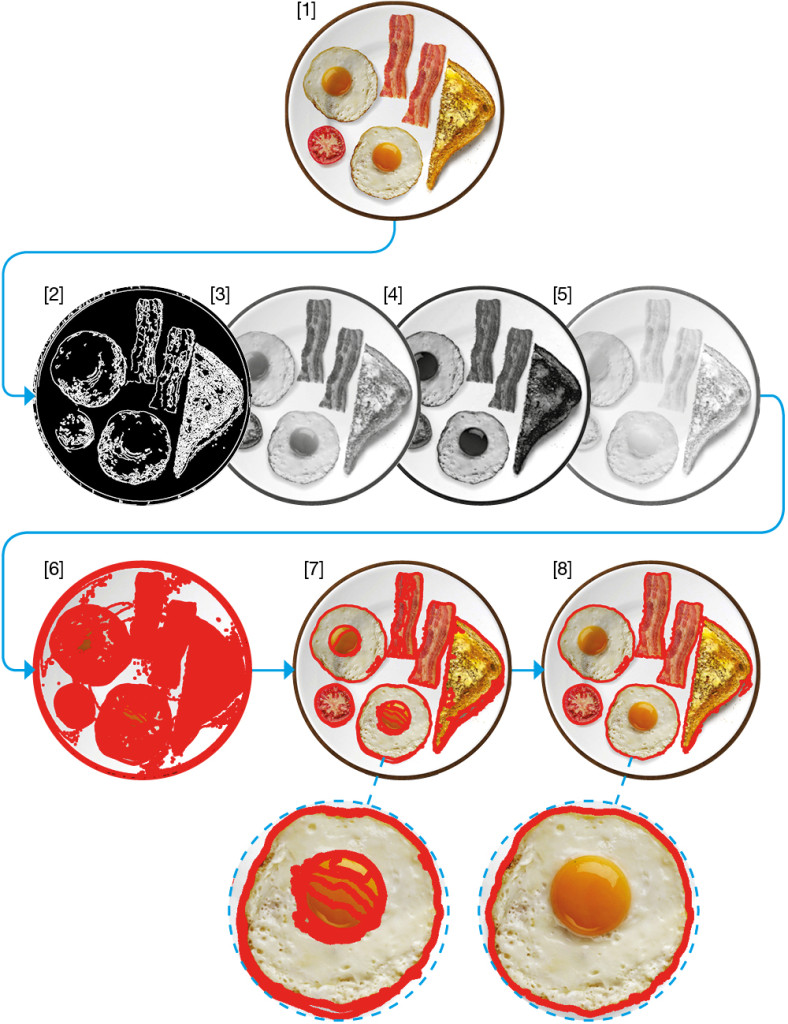

The next step is to identify the individual items on the plate, the contours in the image. To do this, the app performs the following:

- with the cropped image [1], the app applies the Canny edge detector operator [2], and extracts the three colour plane images [3,4,5]

- with the Canny and colour plane images, the OpenCV findContours function is used to extract contours (and hierarchical information) from each image. findContours relies on the algorithm described in “Topological Structural Analysis of Digitized Binary Images by Border Following” by Satoshi Suzuki and Keiichi Abe. In this example, lots of contours are detected and highlighted in red [6]

- next, a first pass of contour filtering rejects non-convex contours (e.g. open contours like a ‘C’ shape), and contours whose surface area falls outside a sensible range (too big or too small) [7]

- finally, the second-pass contour filter removes duplicates (matching similar contours based on size and location), and child contours (contours contained within a larger contour), which for example removes the egg yolk contours from within the egg white contour [8].

The process for detecting and filtering contours from the image.
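The two filtering passes described above can be sketched as follows. This is a simplified illustration under several assumptions, not the app's implementation: each contour is reduced to a hypothetical `Contour` record holding its bounding box, area and a precomputed convexity flag, duplicates are matched with a pixel tolerance I picked arbitrarily, and "contained within" is approximated by bounding-box containment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contour:
    name: str
    x: float  # bounding box left
    y: float  # bounding box top
    w: float  # bounding box width
    h: float  # bounding box height
    area: float
    convex: bool = True


def first_pass(contours, min_area, max_area):
    """Reject non-convex contours and contours outside the area range."""
    return [c for c in contours if c.convex and min_area <= c.area <= max_area]


def is_duplicate(a, b, tol):
    """Similar location and size, within tol pixels on every box edge."""
    return (abs(a.x - b.x) <= tol and abs(a.y - b.y) <= tol
            and abs(a.w - b.w) <= tol and abs(a.h - b.h) <= tol)


def contains(outer, inner):
    """True if inner's bounding box lies inside outer's bounding box."""
    return (outer.x <= inner.x and outer.y <= inner.y
            and outer.x + outer.w >= inner.x + inner.w
            and outer.y + outer.h >= inner.y + inner.h)


def second_pass(contours, tol=5):
    """Drop duplicates and child contours, visiting the largest first."""
    kept = []
    for c in sorted(contours, key=lambda c: c.area, reverse=True):
        if any(is_duplicate(k, c, tol) or contains(k, c) for k in kept):
            continue
        kept.append(c)
    return kept


# Made-up contours, loosely modelled on the egg example above.
detected = [
    Contour("egg white", 100, 100, 200, 200, 30_000),
    Contour("egg white (dup)", 102, 101, 199, 198, 29_500),
    Contour("egg yolk", 150, 150, 60, 60, 2_800),
    Contour("toast", 400, 120, 150, 150, 20_000),
    Contour("crumb", 10, 10, 5, 5, 20),
    Contour("plate rim", 0, 0, 1000, 1000, 700_000),
    Contour("open C shape", 600, 600, 80, 80, 3_000, convex=False),
]
items = second_pass(first_pass(detected, min_area=500, max_area=100_000))
names = [c.name for c in items]
```

Visiting the largest contours first means a parent is always kept before its children are considered, so the yolk is dropped in favour of the egg white rather than the other way round.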

Extracting contours from the image

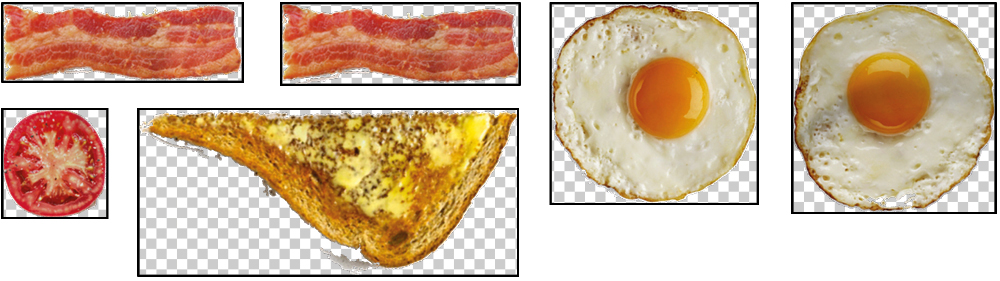

For each of the 6 detected contours (highlighted in red in [8] above), the app then cuts the contour from the source image, and reduces each one to its minimum bounding rectangle (the smallest-area rectangle that still contains the whole item). It does this using the rotation and surface area calculations shown below in the ridiculous GIF of a spinning slice of toast. Reducing the images to rectangles simplifies some of the later steps in this process (e.g. calculating centroids and surface areas).

Extracted contour, rotated to minimum bounding rectangle (surface area reduced from 196,504 pixels to 172,224 pixels using a rotation of 80 degrees).

Number of pixels for each rotation (red line marks the smallest surface area, at 80 degrees).
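The rotate-and-measure search can be sketched like this. It is a minimal pure-Python version working on contour points rather than pixels, so treat it as an illustration of the idea and not the app's code; the function names are made up for this example. It only tries angles from 0 to 89 degrees because the area of an upright bounding box repeats every 90 degrees.

```python
import math


def rotate(points, degrees):
    """Rotate (x, y) points about the origin by the given angle."""
    t = math.radians(degrees)
    cos_t, sin_t = math.cos(t), math.sin(t)
    return [(x * cos_t - y * sin_t, x * sin_t + y * cos_t) for x, y in points]


def bounding_area(points, degrees):
    """Area of the upright bounding box after rotating the points."""
    rotated = rotate(points, degrees)
    xs = [x for x, _ in rotated]
    ys = [y for _, y in rotated]
    return (max(xs) - min(xs)) * (max(ys) - min(ys))


def best_bounding_rotation(points, step=1):
    """Angle in [0, 90) giving the smallest upright bounding box."""
    return min(range(0, 90, step), key=lambda d: bounding_area(points, d))


# A 4 x 2 rectangle tilted by 30 degrees stands in for a contour.
tilted = rotate([(0, 0), (4, 0), (4, 2), (0, 2)], 30)
angle = best_bounding_rotation(tilted)
area = bounding_area(tilted, angle)
```

For the tilted rectangle, rotating a further 60 degrees squares it up with the axes, and the bounding box shrinks to the rectangle's own area of 8. OpenCV also provides `minAreaRect`, which computes a minimum-area rotated rectangle directly.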

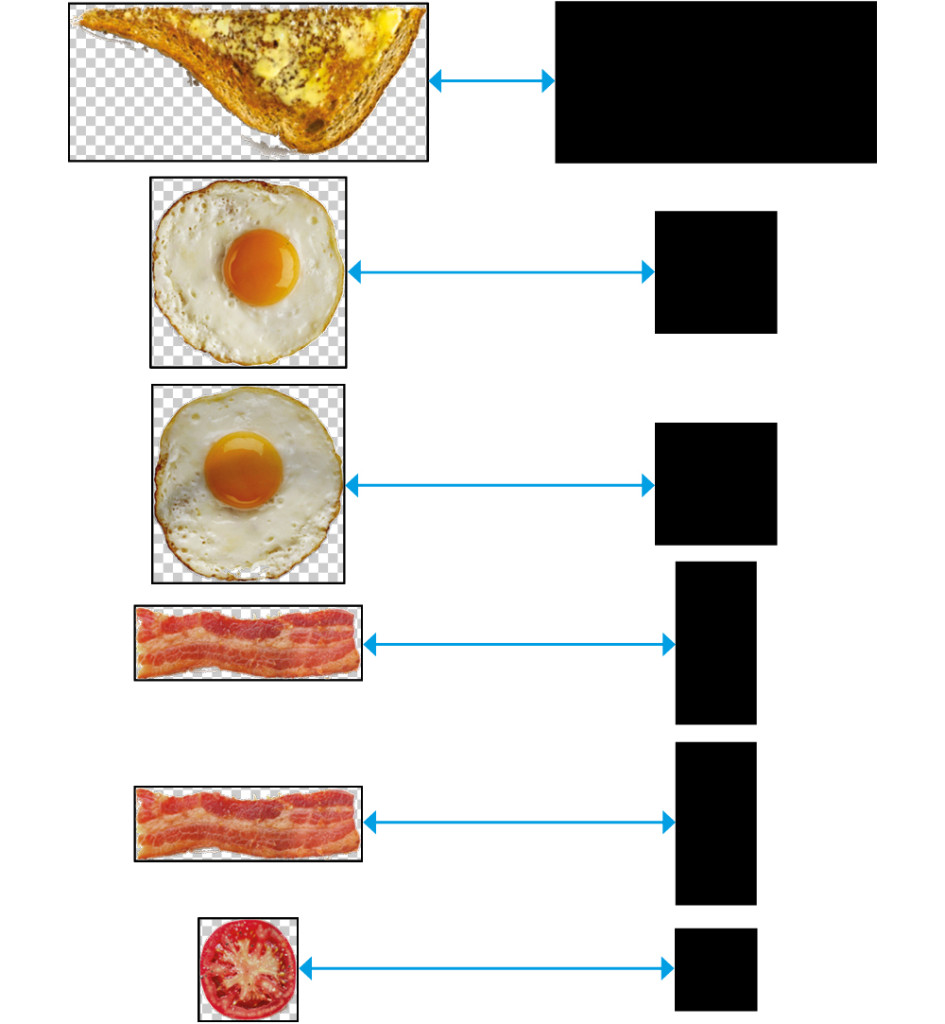

Once this process has run for all 6 contours, we have the following minimum bounding rectangle images:

All extracted contours contained within their minimum bounding rectangles.

Placing extracted images on target template

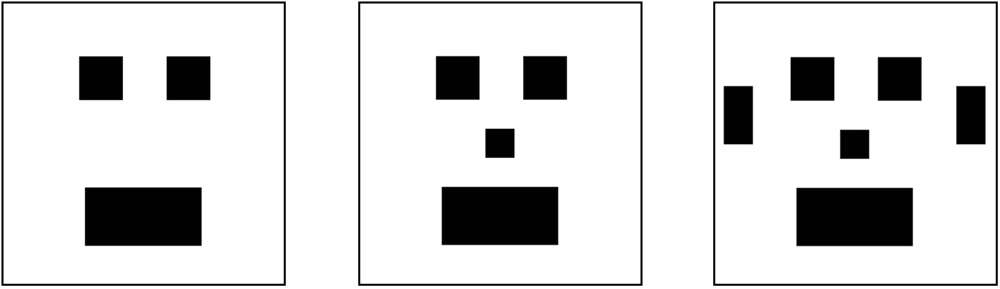

This is where the magic happens. The app has a predefined set of target templates – a group of simple polygons – that can be used as the foundation for arranging the extracted images. For the sake of this demo app, I defined 3 target templates, ranging from 3 to 6 polygons – all representing a face:

The three available target templates.

The app next performs the following steps:

- selects the target template based on the number of extracted images – in this example, we’ve extracted 6 items from the plate, so the most complex target template (which conveniently includes 6 polygons) is selected

- next, the extracted images and template polygons are sorted in order of surface area size, largest to smallest, and paired up

- in surface area size order, the app places the extracted images on the target template polygons, centroid-on-centroid (aligning the points of the centre of mass for each rectangle), and rotates the extracted image, calculating the intersection surface area coverage for each rotation through 180 degrees until the maximum surface area coverage is found

- finally, the app draws each extracted image back to a new empty plate image, at the template polygon location and desired rotation.
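Template selection and pairing can be sketched as below. The template contents, polygon areas and most of the item areas are invented for illustration (only the toast's 172,224 pixels comes from the example above), and the selection rule, picking the largest template that has no more polygons than there are extracted items, is my assumption about what "based on the number of extracted images" means in practice.

```python
# Hypothetical templates: polygon name -> surface area in pixels.
TEMPLATES = [
    {"left eye": 30_000, "right eye": 30_000, "mouth": 90_000},
    {"left eye": 30_000, "right eye": 30_000, "nose": 20_000, "mouth": 90_000},
    {"left ear": 60_000, "right ear": 60_000, "left eye": 30_000,
     "right eye": 30_000, "nose": 20_000, "mouth": 90_000},
]


def select_template(templates, item_count):
    """Pick the most complex template that the extracted items can fill."""
    candidates = [t for t in templates if len(t) <= item_count]
    if not candidates:
        raise ValueError("not enough extracted items for any template")
    return max(candidates, key=len)


def sorted_by_area(areas):
    """Names ordered by surface area, largest first (ties keep input order)."""
    return [name for name, _ in
            sorted(areas.items(), key=lambda kv: kv[1], reverse=True)]


def pair_by_area(items, polygons):
    """Pair the largest item with the largest polygon, and so on down."""
    return list(zip(sorted_by_area(items), sorted_by_area(polygons)))


# Extracted items: name -> area of the minimum bounding rectangle.
items = {"toast": 172_224, "bacon 1": 95_000, "bacon 2": 90_000,
         "egg": 60_000, "tomato": 25_000, "mushroom": 18_000}

template = select_template(TEMPLATES, len(items))
pairs = pair_by_area(items, template)
```

With six items, the six-polygon template is chosen, the toast lands on the mouth and the first rasher of bacon on the left ear.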

Extracted image (bacon) placed centroid-to-centroid with the target polygon (left ear), and rotated through 180° until maximum surface area coverage is found. This is another ridiculous GIF, what am I even doing.
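The rotation search for maximum coverage can be sketched as follows. This is an approximation I chose for illustration, not the app's method: coverage is estimated by sampling the centre of every 1 x 1 cell inside the target polygon and counting how many fall inside the rotated rectangle. All function names are made up for this example.

```python
import math


def point_in_polygon(px, py, polygon):
    """Ray-casting test for a point against a simple polygon."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > py) != (y2 > py):
            x_cross = x1 + (py - y1) * (x2 - x1) / (y2 - y1)
            if px < x_cross:
                inside = not inside
    return inside


def point_in_rotated_rect(px, py, centre, width, height, degrees):
    """Test a point against a rectangle rotated about its centre."""
    t = math.radians(degrees)
    dx, dy = px - centre[0], py - centre[1]
    # Rotate the point by -t into the rectangle's own frame.
    lx = dx * math.cos(t) + dy * math.sin(t)
    ly = -dx * math.sin(t) + dy * math.cos(t)
    return abs(lx) <= width / 2 and abs(ly) <= height / 2


def best_coverage_rotation(polygon, centre, width, height, step=1):
    """Return (angle, covered fraction) maximising polygon coverage."""
    xs = [x for x, _ in polygon]
    ys = [y for _, y in polygon]
    samples = [
        (x + 0.5, y + 0.5)
        for x in range(math.floor(min(xs)), math.ceil(max(xs)))
        for y in range(math.floor(min(ys)), math.ceil(max(ys)))
        if point_in_polygon(x + 0.5, y + 0.5, polygon)
    ]
    if not samples:
        raise ValueError("polygon is too small to sample")

    def coverage(degrees):
        return sum(point_in_rotated_rect(px, py, centre, width, height, degrees)
                   for px, py in samples)

    best = max(range(0, 180, step), key=coverage)
    return best, coverage(best) / len(samples)


# A tall 20 x 60 "ear" polygon and a wide 60 x 20 "bacon" rectangle,
# both centred on the origin (centroid-on-centroid).
ear = [(-10, -30), (10, -30), (10, 30), (-10, 30)]
angle, fraction = best_coverage_rotation(ear, (0, 0), width=60, height=20)
```

Here the wide rectangle has to be turned through 90 degrees before it covers the tall polygon completely. Sampling is slower than an exact polygon intersection, but it works for any simple polygon and keeps the example short.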

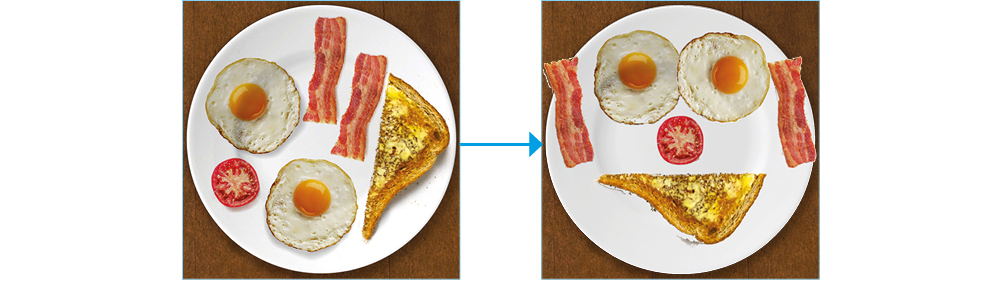

Displaying the results

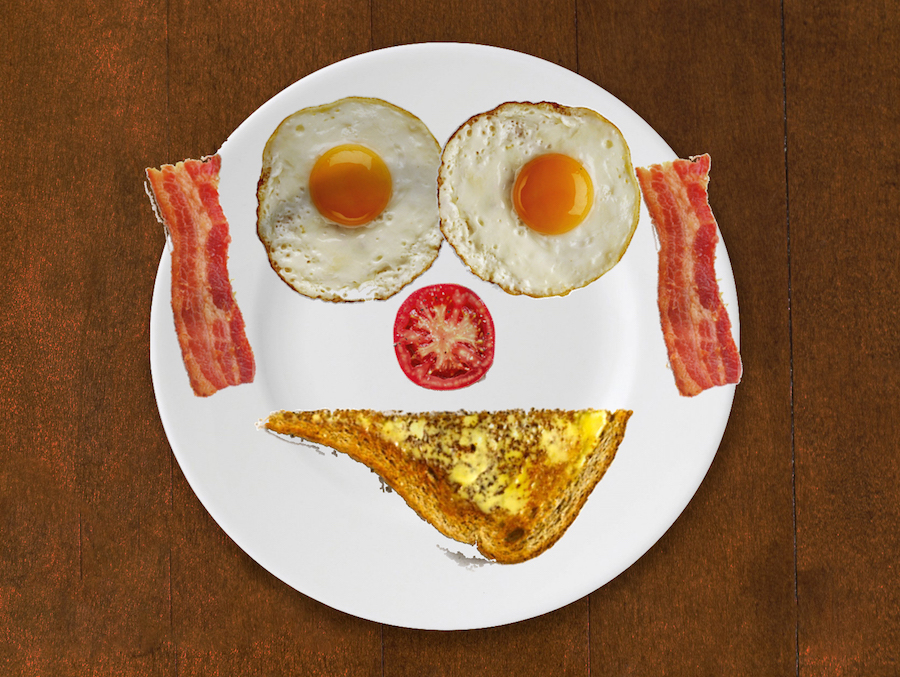

Et voilà! A completely useless picture of food rearranged to make a smiley face. The app makes use of some nice Facebook Pop animations to show the detected items, and rotates and fades from the input image to the output image.

The input and output images.

Limitations

Way too many to list! Building this app was essentially a fun academic task, to see how feasible it was to get even a single sample image to work correctly, and to think through the high-level problem. As such, this app will almost certainly not work very well with other images (unless they are as contrived as my demo image is).

If I were to spend more time on this, the key step that would need more work is the contour detection functionality. More needs to be done with the Canny and other edge detection filters, improving the ability to detect overlapping items, etc. (there’s a reason why the items are so spread out on the plate in my demo). Google’s Vision API would be perfect for this, but I’ll have to wait until that’s publicly available.

Downloads

The code is available over at the GitHub repo.

it looks you prefer LCHF diet.

This is great – just what I was waiting for… – If you make those faces snapchat-recognizable (so filters can be applied to food), that would be the pinnacle of usefulness. Thx & best wishes, Anja

This is great. Where is the donate button?

Fun and funny article. Way more useful that you (purport) to think! Thanks so much!

Finally! And I absolutely love the very precise bacon-rotation illustration!